AI value in life sciences depends on context. A model that cannot safely reach trusted systems will stay trapped in generic answers. A model that can reach too much, too quickly, creates a different problem: uncontrolled access, unclear evidence, and risk that moves faster than governance.

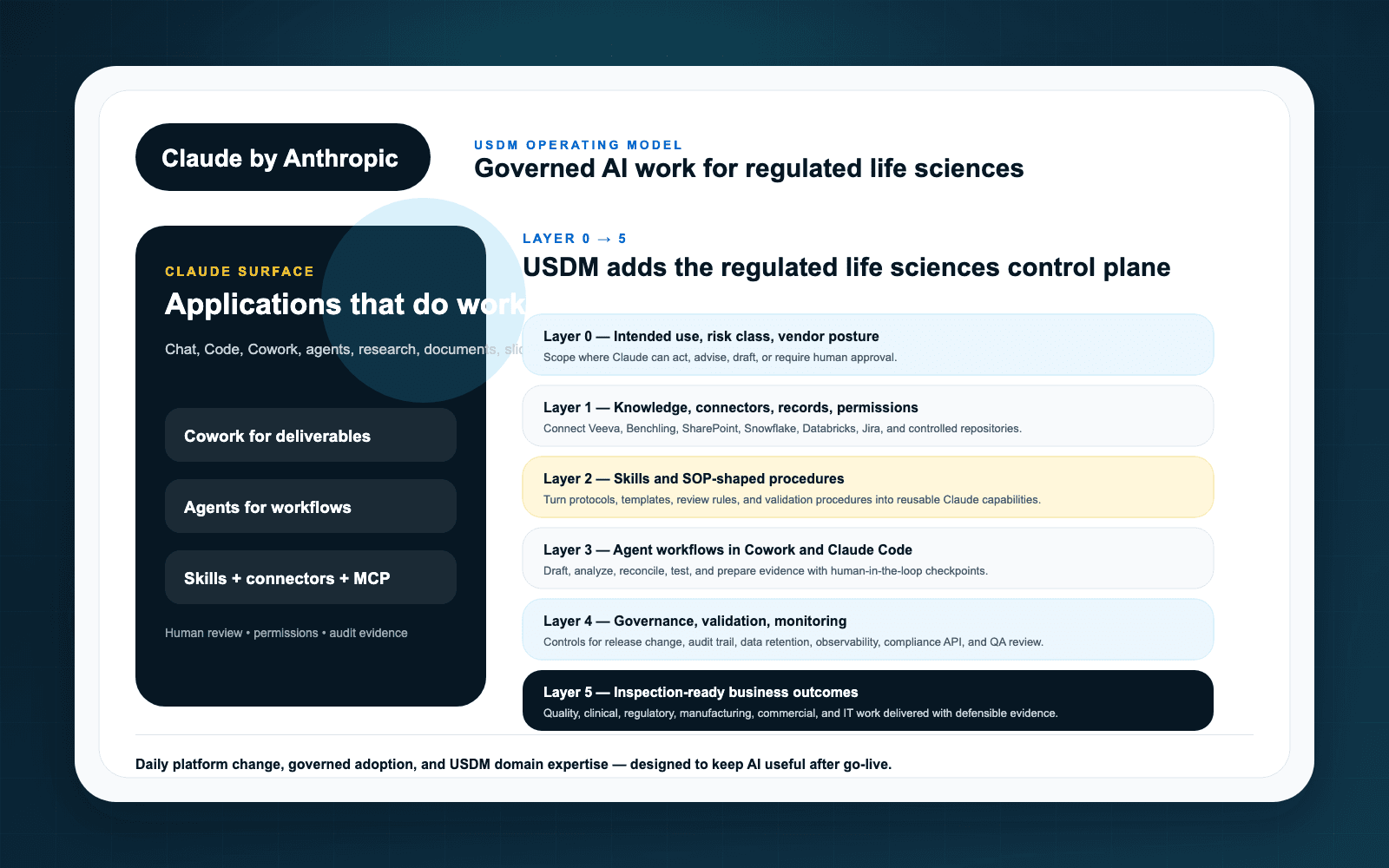

That is why Anthropic’s work on Claude connectors, Claude Skills, and the Model Context Protocol (MCP) matters. Together, they point toward a new integration layer for AI-assisted work. In regulated environments, that layer has to be designed like critical infrastructure.

The integration problem AI exposes

Most life sciences organizations run on a dense ecosystem of quality systems, regulatory repositories, clinical platforms, commercial systems, collaboration tools, data lakes, and document stores. Traditional integrations move records between systems. AI integrations add a new pattern: a user asks for help, and the AI needs governed context from multiple systems to respond or act.

Anthropic describes MCP as an open standard for connecting AI assistants to the systems where data lives. That is important because one-off integrations do not scale well. But standardization does not remove the need for governance. It makes governance more urgent because a reusable protocol can expand quickly across teams and tools.
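
To make the standardization point concrete, here is a minimal sketch of the JSON-RPC 2.0 message an MCP client sends to ask a server to run a tool. The `tools/call` method and its `name`/`arguments` parameters follow the published MCP specification; the tool name `search_sops`, its arguments, and the helper `build_tool_call` are hypothetical, and transport details (stdio or HTTP) are left out.

```python
import json
from itertools import count

# Monotonic request IDs; JSON-RPC 2.0 uses the id to match responses to requests.
_request_ids = count(1)


def build_tool_call(tool_name: str, arguments: dict) -> str:
    """Serialize an MCP `tools/call` request as a JSON-RPC 2.0 message."""
    message = {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(message)


# Hypothetical tool exposed by a document-management MCP server.
request = build_tool_call(
    "search_sops",
    {"query": "deviation handling", "status": "effective"},
)
print(request)
```

Because every MCP server accepts the same message shape, adding another connector is cheap. That is exactly why decisions about which tools a server exposes, and to whom, have to be made deliberately rather than inherited from whatever the server happens to offer.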

Connectors bring context. Skills package repeatable work.

Connectors help Claude reach approved systems and content. Skills can package instructions, workflows, and domain-specific expertise for repeatable tasks. Used well, those capabilities can reduce one-off prompting and make AI assistance more consistent.

Used poorly, they can create hidden process variation. A skill that drafts a regulatory summary, reviews an SOP, or performs a quality triage step is not merely a productivity shortcut. It may become part of how work is performed. That means it needs ownership, versioning, testing, monitoring, and retirement criteria.
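
One way to make those expectations checkable is to keep a registry entry per skill version and refuse release until each control is in place. The sketch below assumes a hypothetical `SkillRecord` structure and field names; it is not part of Claude Skills or any Anthropic API, just an illustration of treating a skill like a controlled process component.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Optional


@dataclass
class SkillRecord:
    """Hypothetical registry entry for a skill that supports regulated work."""
    name: str
    version: str            # version of the skill package under change control
    owner: str              # accountable process owner
    intended_use: str
    test_evidence: list[str] = field(default_factory=list)  # IDs of executed test records
    monitoring_plan: Optional[str] = None
    retirement_criteria: Optional[str] = None
    retired_on: Optional[date] = None

    def release_gaps(self) -> list[str]:
        """Return the controls still missing before this version can be released."""
        gaps = []
        if not self.owner:
            gaps.append("owner")
        if not self.test_evidence:
            gaps.append("test evidence")
        if not self.monitoring_plan:
            gaps.append("monitoring plan")
        if not self.retirement_criteria:
            gaps.append("retirement criteria")
        if self.retired_on is not None:
            gaps.append("skill is retired")
        return gaps


# Hypothetical skill and test record ID, for illustration only.
skill = SkillRecord(
    name="sop-impact-review",
    version="1.2.0",
    owner="QA Document Control",
    intended_use="Draft an SOP impact assessment for reviewer approval",
    test_evidence=["TR-0042"],
)
print(skill.release_gaps())  # ['monitoring plan', 'retirement criteria']
```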

Regulated design questions

Before connecting Claude to enterprise systems, USDM recommends answering practical control questions:

- Access: Which users, roles, groups, and service identities can invoke the connector or skill?

- Scope: Which repositories, record types, fields, projects, and documents are in scope?

- Action: Is Claude reading, drafting, recommending, updating, or triggering downstream workflow?

- Evidence: What prompts, sources, outputs, and review decisions must be retained?

- Validation: What testing is appropriate for the intended use and risk level?

- Change: Who approves changes to connectors, skills, prompts, permissions, and model configuration?
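
Several of these answers can be captured as an explicit, reviewable policy instead of implicit configuration. The sketch below covers access, scope, action, and evidence; validation and change approval remain procedural, so the policy only records who approves changes. `ConnectorPolicy`, `AuditLog`, `authorize`, the ordering of `Action`, and the role and repository names are all hypothetical assumptions, not features of MCP or Claude.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from enum import Enum


class Action(Enum):
    # Ordered from least to most consequential, mirroring the "Action" question.
    READ = 1
    DRAFT = 2
    RECOMMEND = 3
    UPDATE = 4
    TRIGGER_WORKFLOW = 5


@dataclass(frozen=True)
class ConnectorPolicy:
    """Hypothetical policy answering the access, scope, and action questions."""
    connector: str
    allowed_roles: frozenset[str]
    allowed_repositories: frozenset[str]
    max_action: Action
    change_approvers: tuple[str, ...]  # documents who signs off on policy changes


@dataclass
class AuditLog:
    """Append-only evidence of every authorization decision."""
    entries: list[dict] = field(default_factory=list)

    def record(self, **entry) -> None:
        entry["timestamp"] = datetime.now(timezone.utc).isoformat()
        self.entries.append(entry)


def authorize(policy: ConnectorPolicy, user_roles: set[str], repository: str,
              action: Action, log: AuditLog) -> bool:
    """Allow the request only if role, scope, and action all fall within policy."""
    reasons = []
    if not policy.allowed_roles & user_roles:
        reasons.append("role not permitted")
    if repository not in policy.allowed_repositories:
        reasons.append("repository out of scope")
    if action.value > policy.max_action.value:
        reasons.append(f"action {action.name} exceeds {policy.max_action.name}")
    allowed = not reasons
    # Record both allowed and denied requests so the decision trail is complete.
    log.record(connector=policy.connector, repository=repository,
               action=action.name, allowed=allowed, reasons=reasons)
    return allowed


policy = ConnectorPolicy(
    connector="qms-documents",
    allowed_roles=frozenset({"qa_reviewer"}),
    allowed_repositories=frozenset({"effective_sops"}),
    max_action=Action.DRAFT,
    change_approvers=("QA Head", "IT System Owner"),
)
log = AuditLog()
print(authorize(policy, {"qa_reviewer"}, "effective_sops", Action.READ, log))    # True
print(authorize(policy, {"qa_reviewer"}, "effective_sops", Action.UPDATE, log))  # False
print(log.entries[-1]["reasons"])  # ['action UPDATE exceeds DRAFT']
```

Logging denials as well as approvals is a deliberate choice: the denied requests are often the most useful evidence that scope limits actually work.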

A life sciences integration layer

USDM’s Claude for life sciences operating model treats connectors, MCP, and skills as the middle layers between intended use and agent workflows. That sequencing matters. If organizations jump straight to agents without disciplined context and repeatable skills, they create brittle automation with impressive demos and weak control.

A better path is staged: define the use case, connect only the approved context, package repeatable expertise into controlled skills, then evaluate whether agentic workflow is appropriate.
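
The staged path can be enforced as a simple gate, so a use case cannot move to agentic workflow before its earlier stages are approved. This is a minimal sketch; the `Stage` names and the `can_enter` helper are hypothetical labels for the four steps described above.

```python
from enum import IntEnum


class Stage(IntEnum):
    """Hypothetical stages of the staged path, in order."""
    USE_CASE_DEFINED = 1
    CONTEXT_CONNECTED = 2
    SKILLS_CONTROLLED = 3
    AGENTIC_EVALUATED = 4


def can_enter(target: Stage, approved: set[Stage]) -> bool:
    """A stage may start only when every earlier stage has been approved."""
    return all(stage in approved for stage in Stage if stage < target)


approved = {Stage.USE_CASE_DEFINED, Stage.CONTEXT_CONNECTED}
print(can_enter(Stage.SKILLS_CONTROLLED, approved))  # True
print(can_enter(Stage.AGENTIC_EVALUATED, approved))  # False: skills not yet controlled
```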

How to start

Start with one workflow where better context clearly matters and the risk can be bounded. Examples include evidence retrieval for inspection readiness, SOP impact review, regulatory intelligence briefings, or controlled commercial enablement content. Build the connector and skill governance once, then reuse the pattern.

Key takeaways

- MCP, connectors, and skills form an integration layer for AI-assisted work.

- In regulated environments, that layer needs access governance, validation, evidence, and change control.

- Skills should be owned and versioned like controlled process components when they support regulated work.

- USDM can help design the operating model through an AI Readiness Assessment and a Claude governance roadmap.