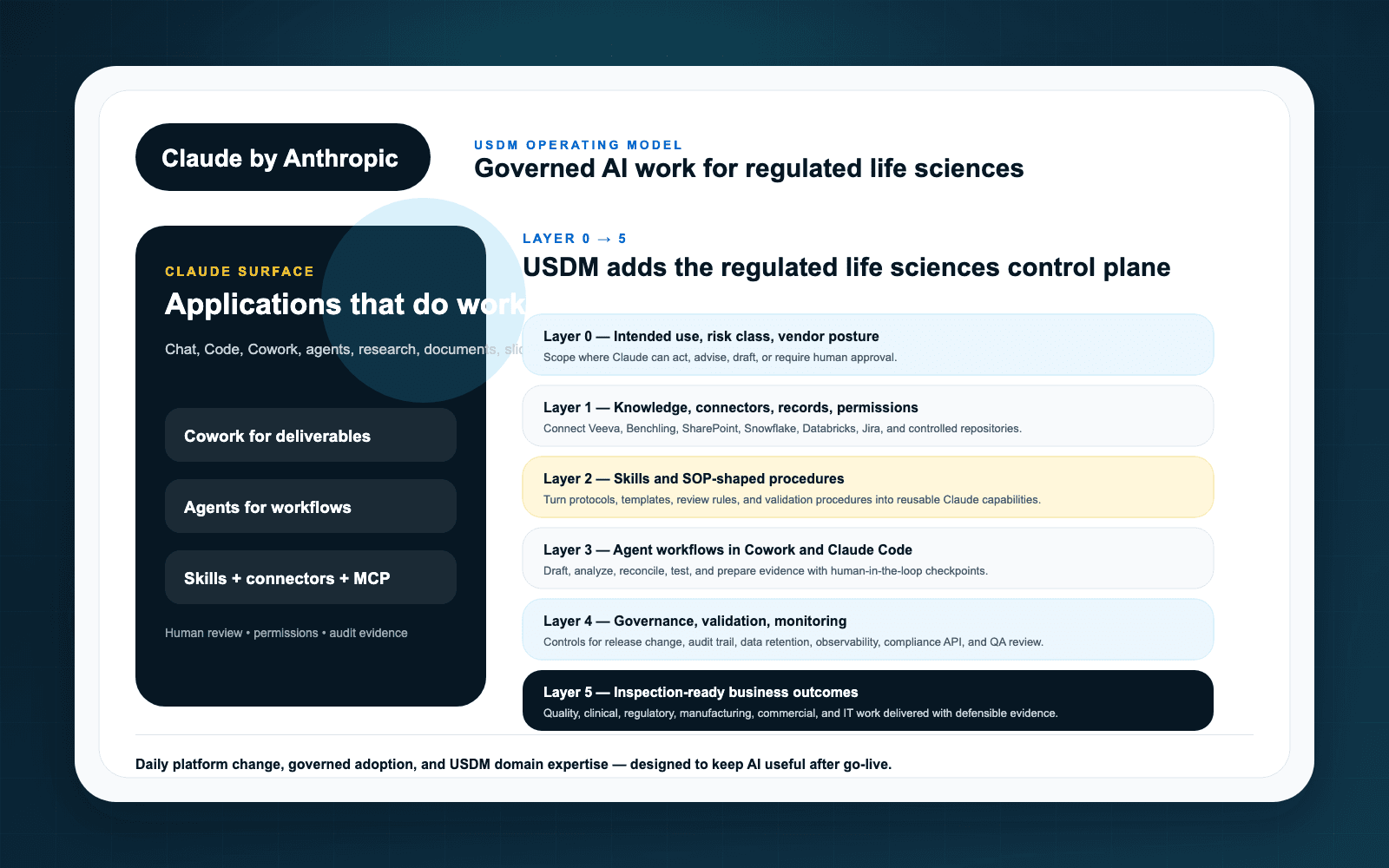

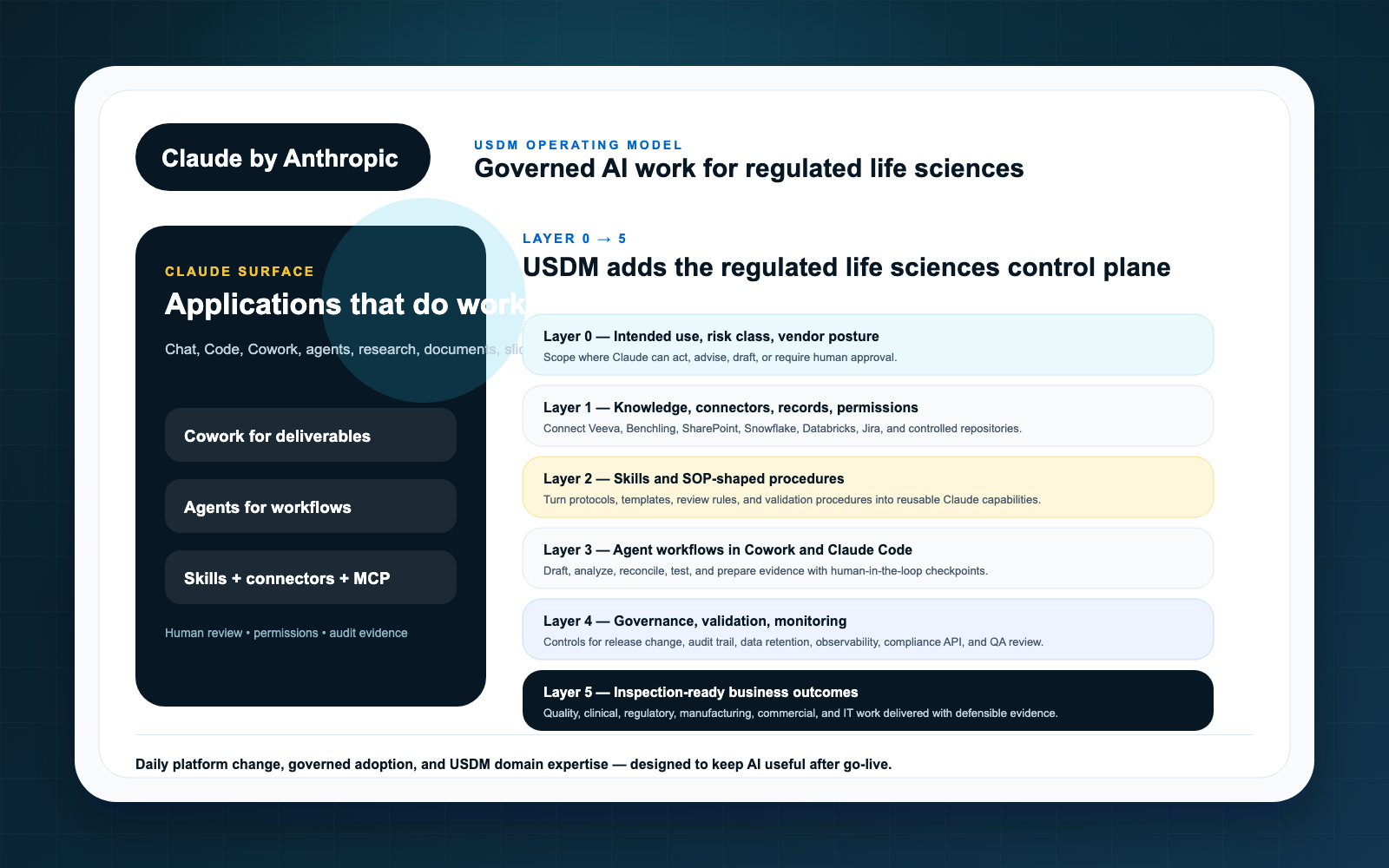

Claude adoption in life sciences should not begin with a tool rollout. It should begin with an operating model. Without one, organizations tend to accumulate disconnected pilots, inconsistent data practices, unapproved use cases, and unclear accountability. That is how AI enthusiasm becomes AI sprawl.

USDM uses a Layer 0-5 model to help regulated organizations sequence Claude adoption from strategy to execution. The model is intentionally practical: define the use case, govern the context, connect systems, package repeatable expertise, introduce agents carefully, and capture the evidence required to operate with confidence.

Layer 0: Intended use

Every serious Claude initiative needs a named intended use. “Let teams use AI” is not an intended use. A useful statement names the workflow, business owner, user population, source systems, expected outputs, review requirements, prohibited uses, and success criteria.

This layer is also where organizations classify each use case as productivity support, business process support, GxP-adjacent, or part of a regulated workflow.
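One way to keep intended-use statements from drifting back toward “Let teams use AI” is to capture them as a structured record that cannot be filed with elements missing. This Python sketch illustrates that idea. The `IntendedUse` class, its field names, and the `missing_elements` check are hypothetical and simply mirror the elements and classifications listed for Layer 0. They are not part of any USDM or Anthropic tool.

```python
from dataclasses import dataclass
from enum import Enum


class UseCaseClass(Enum):
    # The four classifications named in Layer 0.
    PRODUCTIVITY_SUPPORT = "productivity support"
    BUSINESS_PROCESS_SUPPORT = "business process support"
    GXP_ADJACENT = "GxP-adjacent"
    REGULATED_WORKFLOW = "regulated workflow"


@dataclass
class IntendedUse:
    # Hypothetical record: one field per element of an intended-use statement.
    workflow: str
    business_owner: str
    user_population: str
    source_systems: list
    expected_outputs: list
    review_requirements: str
    prohibited_uses: list
    success_criteria: list
    classification: UseCaseClass


def missing_elements(use: IntendedUse) -> list:
    """Return the names of elements left empty (empty string or empty list)."""
    return [
        name
        for name, value in vars(use).items()
        if name != "classification" and not value
    ]


# "Let teams use AI" fills one field and leaves the rest empty.
vague = IntendedUse(
    workflow="Let teams use AI",
    business_owner="",
    user_population="",
    source_systems=[],
    expected_outputs=[],
    review_requirements="",
    prohibited_uses=[],
    success_criteria=[],
    classification=UseCaseClass.PRODUCTIVITY_SUPPORT,
)
print(missing_elements(vague))
```

The vague statement is flagged for every element except `workflow`, because that field is technically non-empty. A mechanical check like this catches omissions, but someone still has to judge whether the text names a real workflow.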

Layer 1: Governed context

Claude’s usefulness depends on context. Its risk also depends on context. Governed context means defining what content Claude can access, how permissions are inherited or enforced, what sensitive data is excluded, and which systems remain authoritative.

For life sciences, this layer often includes document classification, role design, data retention expectations, privacy boundaries, and source citation requirements.
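A short sketch can show what enforcing permissions at the point where context is assembled looks like, as opposed to assuming permissions carry over. In this minimal Python example, the `Document` class, the `governed_context` function, the classification labels, and the source system names (`QMS`, `Safety DB`, `HRIS`) are all hypothetical. A document reaches Claude only if the user holds one of its allowed roles and its classification is not excluded. Every included document carries a citation back to its authoritative system.

```python
from dataclasses import dataclass

# Hypothetical policy: classifications that are never sent to Claude.
EXCLUDED_CLASSIFICATIONS = {"patient-data"}


@dataclass(frozen=True)
class Document:
    doc_id: str
    source_system: str        # the authoritative system of record
    classification: str       # hypothetical labels, e.g. "internal", "patient-data"
    allowed_roles: frozenset  # roles permitted to read this document


def governed_context(docs, user_roles):
    """Return (document, citation) pairs this user may pass to Claude as context."""
    selected = []
    for doc in docs:
        if doc.classification in EXCLUDED_CLASSIFICATIONS:
            continue  # sensitive data is excluded regardless of role
        if not (doc.allowed_roles & set(user_roles)):
            continue  # permission is enforced here, not assumed
        citation = f"[{doc.source_system}:{doc.doc_id}]"
        selected.append((doc, citation))
    return selected


docs = [
    Document("SOP-101", "QMS", "internal", frozenset({"qa", "regulatory"})),
    Document("CASE-7", "Safety DB", "patient-data", frozenset({"qa"})),
    Document("HR-3", "HRIS", "confidential", frozenset({"hr"})),
]
for doc, citation in governed_context(docs, {"qa"}):
    print(doc.doc_id, citation)
```

A QA user sees the SOP but not the safety case, even though the case lists `qa` as an allowed role, because exclusion by classification is checked before permissions.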

Layer 2: Connectors and MCP

Connectors and Model Context Protocol (MCP) integrations are how Claude reaches the systems where work happens. Anthropic describes MCP as an open standard for connecting AI assistants to data sources. That makes MCP strategically important, but it does not make any integration built on it automatically compliant.

USDM treats this layer as governed integration infrastructure. Access, scope, logging, testing, and change control must be explicit.
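One way to picture the controls named here is as a thin wrapper around whatever integration actually reaches a system. This Python sketch assumes such a wrapper. `GovernedConnector`, `ScopeError`, and the `fake_dms` stand-in are hypothetical names, and the code does not use or imitate any MCP SDK API. The wrapper checks each call against an explicit allowlist of operations. It records both allowed and denied attempts in an audit log and stamps each entry with the connector version, so calls can be traced to a change-controlled release.

```python
import logging
from dataclasses import dataclass, field
from typing import Any, Callable

logger = logging.getLogger("connector_audit")


class ScopeError(PermissionError):
    """Raised when a call falls outside a connector's approved scope."""


@dataclass
class GovernedConnector:
    # Hypothetical wrapper; it does not use or imitate any MCP SDK API.
    name: str
    version: str                         # ties each call to a change-controlled release
    approved_operations: frozenset       # explicit scope, e.g. {"read_document"}
    handler: Callable[[str, dict], Any]  # the underlying integration
    audit_log: list = field(default_factory=list)

    def call(self, user: str, operation: str, params: dict) -> Any:
        allowed = operation in self.approved_operations
        entry = {
            "connector": self.name,
            "version": self.version,
            "user": user,
            "operation": operation,
            "allowed": allowed,
        }
        self.audit_log.append(entry)  # denied attempts are recorded too
        logger.info("connector call: %s", entry)
        if not allowed:
            raise ScopeError(f"{operation!r} is outside the approved scope of {self.name}")
        return self.handler(operation, params)


def fake_dms(operation: str, params: dict) -> str:
    # Stand-in for a document management system, useful in tests.
    return f"contents of {params['doc_id']}"


dms = GovernedConnector("dms", "1.2.0", frozenset({"read_document"}), fake_dms)
text = dms.call("analyst1", "read_document", {"doc_id": "SOP-101"})
try:
    dms.call("analyst1", "delete_document", {"doc_id": "SOP-101"})
except ScopeError as err:
    print("blocked:", err)
```

The underlying integration is passed in as `handler`. This lets a test suite substitute a fake system, as `fake_dms` does here, to exercise scope rules without touching production data.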

Layer 3: Skills

Claude Skills can package instructions and repeatable expertise. In regulated organizations, that is useful because it can reduce prompt variation. It also means skills need owners, versions, testing, and release discipline when they support important workflows.

A skill used for an SOP impact summary or inspection readiness checklist should be managed differently from an informal productivity helper.
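A small registry that treats the two kinds of skill differently can illustrate what release discipline means in practice. This is a minimal Python sketch, and several of its elements are assumptions. `SkillRegistry`, `SkillRelease`, the two-tier split, and the skill names are hypothetical, and the code does not model Anthropic's actual Skills packaging format. Controlled skills need passing tests and a named approver before release. Informal helpers are recorded with an owner and version but are released without that gate.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Tier(Enum):
    INFORMAL = "informal productivity helper"
    CONTROLLED = "supports an important workflow"


@dataclass
class SkillRelease:
    # Hypothetical registry entry, not Anthropic's Skills packaging format.
    name: str
    version: str
    owner: str
    tier: Tier
    tests_passed: bool = False
    approved_by: Optional[str] = None


class SkillRegistry:
    def __init__(self) -> None:
        self._releases = {}  # skill name -> list of SkillRelease, oldest first

    def release(self, skill: SkillRelease) -> None:
        # Controlled skills need passing tests and a named approver.
        if skill.tier is Tier.CONTROLLED:
            if not skill.tests_passed:
                raise ValueError(f"{skill.name} {skill.version}: tests have not passed")
            if not skill.approved_by:
                raise ValueError(f"{skill.name} {skill.version}: no release approver")
        self._releases.setdefault(skill.name, []).append(skill)

    def current(self, name: str) -> SkillRelease:
        # Most recently released version; earlier ones stay on record.
        return self._releases[name][-1]

    def history(self, name: str) -> list:
        return [s.version for s in self._releases.get(name, [])]


registry = SkillRegistry()
registry.release(SkillRelease("sop-impact-summary", "1.0.0", "QA Operations",
                              Tier.CONTROLLED, tests_passed=True, approved_by="QA lead"))
try:
    # An untested revision of a controlled skill is refused.
    registry.release(SkillRelease("sop-impact-summary", "1.1.0", "QA Operations",
                                  Tier.CONTROLLED))
except ValueError as err:
    print("release blocked:", err)
registry.release(SkillRelease("meeting-notes", "0.1.0", "any user", Tier.INFORMAL))
```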

Layer 4: Agent workflows

Agents introduce goal-directed execution across context and tools. That is where value can accelerate, but it is also where control failures become harder to detect after the fact. USDM recommends moving to agents only after the lower layers are clear enough to support bounded autonomy.

Agent workflow design should specify allowed actions, human approvals, exception handling, audit evidence, and monitoring metrics.
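These five design elements can be expressed as a gate that sits between an agent and the actions it proposes. This Python sketch is a hypothetical illustration, not a specific agent framework. `AgentPolicy`, `AgentGate`, and the action names are invented for the example, and the `approve` callback stands in for whatever human review step an organization uses. The gate handles each design element as follows:

- Unlisted actions are blocked.
- Listed high-impact actions wait for human approval.
- Runtime errors are recorded rather than silently retried.
- Every attempt is written to an audit trail.
- Outcome counts serve as simple monitoring metrics.

```python
from collections import Counter
from dataclasses import dataclass, field
from typing import Any, Callable


@dataclass
class AgentPolicy:
    # Hypothetical policy; action names are illustrative only.
    allowed_actions: frozenset
    requires_approval: frozenset  # high-impact actions, a subset of allowed_actions


@dataclass
class AgentGate:
    policy: AgentPolicy
    approve: Callable[[str, dict], bool]  # stands in for a human review step
    audit: list = field(default_factory=list)
    metrics: Counter = field(default_factory=Counter)

    def execute(self, action: str, args: dict, run: Callable[[dict], Any]):
        result = None
        if action not in self.policy.allowed_actions:
            outcome = "blocked"
        elif action in self.policy.requires_approval and not self.approve(action, args):
            outcome = "rejected_by_reviewer"
        else:
            try:
                result = run(args)
                outcome = "executed"
            except Exception as exc:
                # Exception path: record the failure and hand back, no silent retry.
                outcome = f"error: {type(exc).__name__}"
        self.audit.append({"action": action, "args": args, "outcome": outcome})
        self.metrics[outcome.split(":")[0]] += 1
        return outcome, result


policy = AgentPolicy(
    allowed_actions=frozenset({"search_sops", "draft_summary", "file_deviation"}),
    requires_approval=frozenset({"file_deviation"}),
)
# In this example the reviewer declines every approval request.
gate = AgentGate(policy, approve=lambda action, args: False)
gate.execute("search_sops", {"query": "cleaning validation"}, run=lambda a: ["SOP-101"])
gate.execute("file_deviation", {"id": "DEV-9"}, run=lambda a: "filed")
gate.execute("delete_record", {"id": "REC-1"}, run=lambda a: None)
gate.execute("draft_summary", {}, run=lambda a: 1 / 0)
print(dict(gate.metrics))
```

An unbounded agent would simply call `run` directly. In this design, autonomy is bounded by whatever the policy lists, and the audit trail records each attempt whether or not it executed.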

Layer 5: Governance and evidence

The final layer runs across all the others. Governance is not a committee at the end of the process. It is the evidence system that shows how Claude-enabled work is approved, tested, monitored, changed, and retired.

This layer includes AI policy, risk classification, validation strategy, vendor oversight, training, monitoring, incident handling, and management review.
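If governance is an evidence system, each Claude-enabled use case can be modeled as a lifecycle in which every transition leaves a record. This Python sketch builds the lifecycle from the verbs in this section (approved, tested, monitored, changed, retired), plus a starting `proposed` state. The state names, the allowed transitions, and the record identifiers such as `RISK-ASSESSMENT-001` are hypothetical choices for illustration, not a prescribed validation procedure. In this version, a change sends the use case back through approval and testing before it returns to monitored operation.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical lifecycle built from the verbs in Layer 5.
TRANSITIONS = {
    "proposed": {"approved"},
    "approved": {"tested"},
    "tested": {"monitored"},             # released into monitored operation
    "monitored": {"changed", "retired"},
    "changed": {"approved"},             # a change goes back through approval and testing
    "retired": set(),                    # terminal state
}


@dataclass
class GovernedUseCase:
    name: str
    state: str = "proposed"
    evidence: list = field(default_factory=list)

    def transition(self, new_state: str, actor: str, record: str) -> None:
        if new_state not in TRANSITIONS[self.state]:
            raise ValueError(f"{self.name}: cannot move from {self.state} to {new_state}")
        self.evidence.append({
            "from": self.state,
            "to": new_state,
            "actor": actor,
            "record": record,  # pointer to the supporting document
            "at": datetime.now(timezone.utc).isoformat(),
        })
        self.state = new_state


uc = GovernedUseCase("SOP impact summary")
uc.transition("approved", "QA lead", "RISK-ASSESSMENT-001")
uc.transition("tested", "Validation team", "TEST-REPORT-001")
uc.transition("monitored", "System owner", "RELEASE-NOTE-001")
uc.transition("changed", "System owner", "CHANGE-REQUEST-014")
try:
    # Skipping re-approval and re-testing after a change is refused.
    uc.transition("monitored", "System owner", "none")
except ValueError as err:
    print(err)
```

The `evidence` list is the point of the design. It answers who moved the use case, when, and on the basis of which record, and it does this for every stage rather than only at the end.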

Why the sequence matters

Organizations often want to jump straight to agents because the demos are compelling. In life sciences, the smarter path is to build upward. Intended use and context come first. Connectors and skills come next. Agents come when the workflow has enough structure to be safe, valuable, and defensible.

That is the operating model behind USDM’s Anthropic Claude for life sciences approach and the reason we recommend starting with an AI Readiness Assessment before enterprise-wide rollout.

Key takeaways

- Claude adoption needs an operating model, not a loose collection of pilots.

- USDM’s Layer 0-5 model sequences intended use, context, connectors, skills, agents, and governance evidence.

- Connectors, MCP, and skills are control layers as much as technical features.

- Agentic workflows should come after governance patterns are established, not before.