Claude is moving beyond the familiar chatbot pattern. Anthropic describes Claude Cowork as a way to bring Claude Code-style capability into knowledge work. For life sciences organizations, that shift matters because the question is no longer, “Can AI draft something useful?” The question is, “Can AI participate in regulated work without creating uncontrolled risk?”

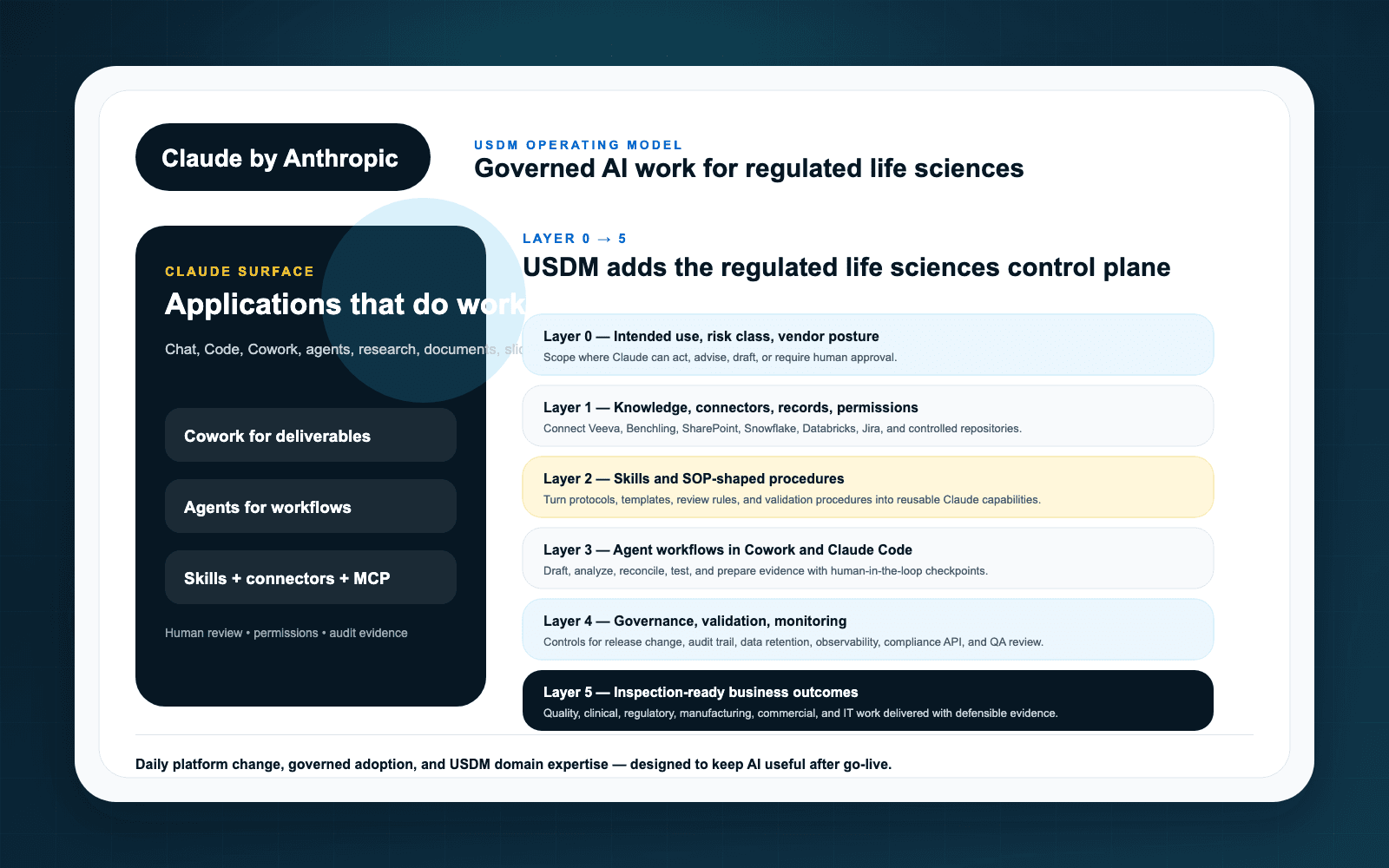

USDM’s view is simple: Claude should be treated as a governed work partner. That means the operating model comes before broad adoption. Teams need a defined intended use, approved data boundaries, human review, evidence capture, and a clear escalation path when Claude is supporting work that touches GxP, quality, regulatory, clinical, medical, or commercial operations.

Why “co-work” is different from chatbot experimentation

Early AI pilots often consist of isolated prompts: summarize this document, draft this email, create this checklist. Those experiments can be useful, but they rarely answer the operational questions regulated companies eventually face. Who approved the use case? What data can Claude access? Which system of record remains authoritative? What evidence shows that a human reviewed the output before it influenced a regulated decision?

Co-work raises the bar because Claude can sit closer to real work: document review, knowledge synthesis, meeting preparation, SOP comparison, investigation support, and controlled task execution. That proximity creates value and risk at the same time.

The life sciences control model

USDM recommends evaluating Claude adoption through a controlled operating model rather than a tool-by-tool checklist. At minimum, that model should include:

- Intended use definition — name the workflow, user role, source systems, expected outputs, and prohibited uses.

- Data boundary design — decide what Claude may see, what it must never see, and how permissions are enforced.

- Human accountability — define who reviews, approves, rejects, or escalates Claude-supported work.

- Evidence capture — retain the records needed to explain prompts, sources, outputs, and review decisions where appropriate.

- Change control — monitor material changes to connectors, skills, prompts, models, and workflows.

This is why USDM built its Anthropic partner page around governed co-work, connectors, skills, agents, validation, and evidence rather than around a generic AI productivity claim.
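To make the five controls above more concrete, here is a minimal Python sketch of how a team might record them for a single use case. It is an illustration under stated assumptions, not a USDM or Anthropic implementation: every class, field, and function name (`IntendedUse`, `EvidenceRecord`, `check_data_boundary`, `record_review`, `is_releasable`) is hypothetical, and a real deployment would enforce these rules in the organization's validated systems rather than in a script.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import List, Optional, Tuple, FrozenSet


@dataclass(frozen=True)
class IntendedUse:
    """Hypothetical record of one approved Claude-supported workflow."""
    workflow: str
    user_role: str
    allowed_sources: FrozenSet[str]    # data boundary: what Claude may see
    prohibited_uses: Tuple[str, ...]
    reviewer_role: str                 # human accountability
    config_version: str                # change control: bump on material changes


@dataclass
class EvidenceRecord:
    """Hypothetical evidence entry: prompt, sources, output, review decision."""
    workflow: str
    prompt: str
    sources: Tuple[str, ...]
    output: str
    reviewer: Optional[str] = None
    decision: Optional[str] = None     # "approved", "rejected", or "escalated"
    created_at: datetime = field(
        default_factory=lambda: datetime.now(timezone.utc)
    )


def check_data_boundary(use: IntendedUse, requested: List[str]) -> List[str]:
    """Return the requested sources that fall outside the approved boundary."""
    return [s for s in requested if s not in use.allowed_sources]


def record_review(record: EvidenceRecord, reviewer: str, decision: str) -> EvidenceRecord:
    """Attach a human review decision; unknown decision values are refused."""
    if decision not in {"approved", "rejected", "escalated"}:
        raise ValueError(f"unknown decision: {decision}")
    record.reviewer = reviewer
    record.decision = decision
    return record


def is_releasable(record: EvidenceRecord) -> bool:
    """An output counts as releasable only after a named human approves it."""
    return record.reviewer is not None and record.decision == "approved"
```

In this sketch, a request that names a source outside `allowed_sources` is flagged before any work starts, and an output without a recorded approval never counts as releasable. The design choice worth noting is that the review decision lives on the same record as the prompt, sources, and output, so the evidence trail and the accountability step cannot drift apart.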

Where Claude Cowork can fit

Claude is a strong candidate for work that depends on reading, synthesis, drafting, comparison, and reasoning across trusted context. In life sciences, practical candidate areas include:

- Quality document review and SOP comparison

- Inspection readiness preparation and evidence retrieval

- Regulatory intelligence synthesis and submission planning support

- Clinical operations knowledge search and controlled briefing development

- Medical affairs content preparation with human medical/legal/regulatory review

- Commercial enablement content grounded in approved materials

The important qualifier: these are candidate workflows, not automatic approvals. Each one needs risk classification, validation strategy, access governance, and review procedures appropriate to the intended use.
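One way to keep "candidate" from quietly becoming "approved" is to gate each workflow on the four prerequisites named above. The following Python sketch assumes a simple intake record; the names `CandidateWorkflow`, `missing_controls`, and `ready_for_approval` are hypothetical, and the check only confirms that each item has been documented, not that its content is adequate.

```python
from dataclasses import dataclass
from typing import List

# The four prerequisites named in the text, as hypothetical field names.
REQUIRED_CONTROLS = (
    "risk_classification",
    "validation_strategy",
    "access_governance",
    "review_procedure",
)


@dataclass
class CandidateWorkflow:
    """Hypothetical intake record for a proposed Claude-supported workflow."""
    name: str
    risk_classification: str = ""
    validation_strategy: str = ""
    access_governance: str = ""
    review_procedure: str = ""


def missing_controls(candidate: CandidateWorkflow) -> List[str]:
    """List the prerequisites that are still empty for this candidate."""
    return [c for c in REQUIRED_CONTROLS if not getattr(candidate, c).strip()]


def ready_for_approval(candidate: CandidateWorkflow) -> bool:
    """A candidate can go to approval only when nothing is missing."""
    return not missing_controls(candidate)
```

A candidate such as `CandidateWorkflow(name="SOP comparison", risk_classification="medium")` would be held back here until its validation strategy, access governance, and review procedure are filled in.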

How USDM helps

USDM helps life sciences organizations translate Claude’s product surface into a practical adoption program. That includes AI readiness assessment, use case prioritization, governance design, validation planning, workflow mapping, evidence expectations, and adoption support.

For organizations that want to move quickly without creating AI sprawl, the starting point is a small set of high-value use cases with strong governance. A well-designed pilot should create reusable patterns for access, review, monitoring, and change control.

Key takeaways

- Claude Cowork should be governed as a work partner, not treated as a side-channel chatbot.

- Life sciences adoption depends on intended use, data boundaries, human accountability, evidence, and change control.

- USDM links Claude adoption to governed workflows through its Anthropic implementation and governance offering.

- Start with an AI Readiness Assessment before scaling Claude across regulated teams.