Agents are where AI strategy becomes operational. A chatbot answers. A workflow assistant helps. An agent can pursue a goal across tools, context, and steps. That makes agents powerful, but it also makes them uncomfortable for regulated organizations that need control, evidence, and accountability.

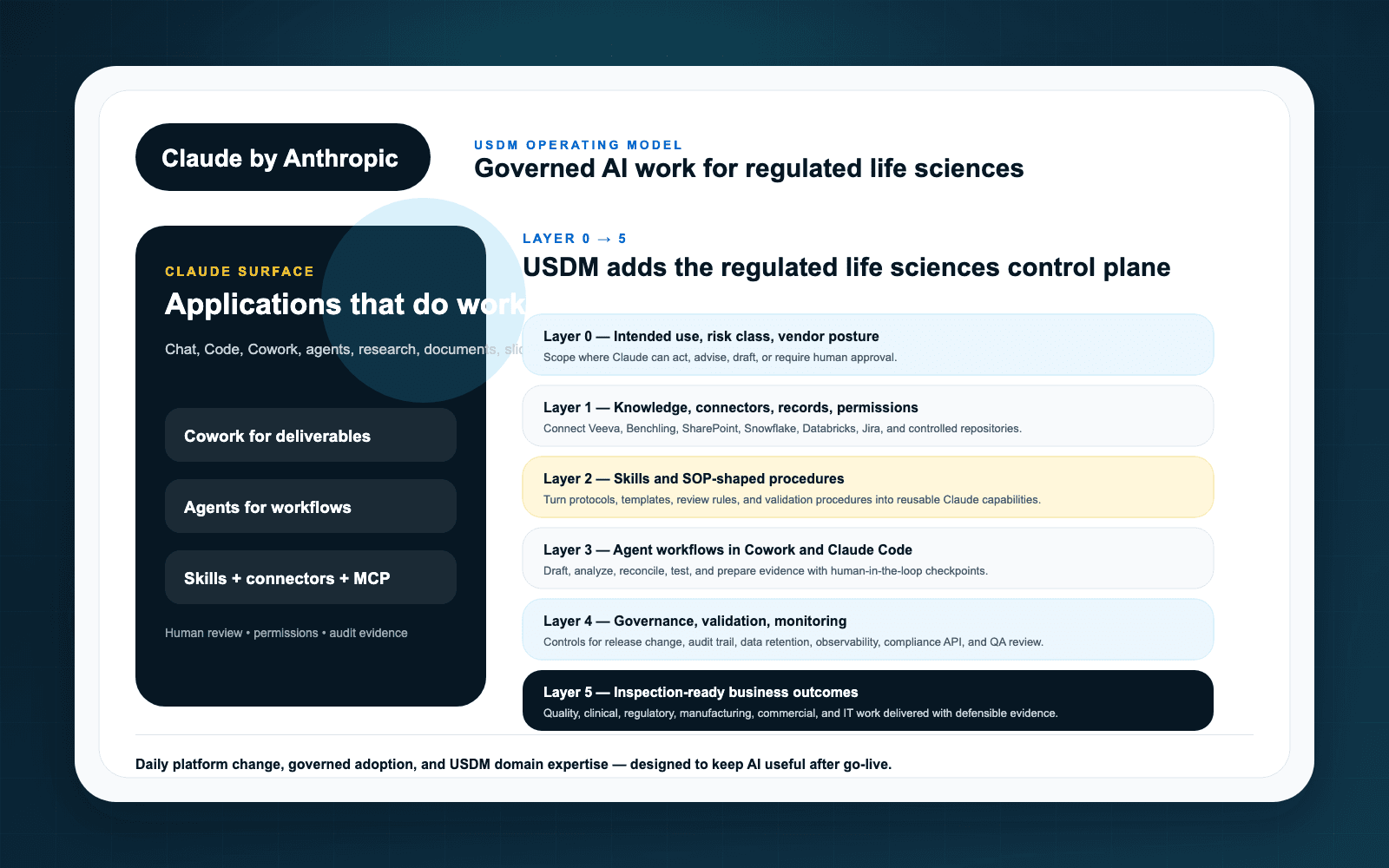

For life sciences teams evaluating Claude, the answer is not to ban agentic workflows until every theoretical risk is resolved. The answer is to govern autonomy in layers: define the intended use, constrain the tools, require human review where appropriate, validate the workflow, monitor performance, and keep change under control.

Why GxP workflows require a different standard

GxP work is not just knowledge work. It is accountable work. When AI supports quality, validation, regulatory, clinical, manufacturing, or safety processes, organizations need to show that the process remains controlled and that humans retain responsibility for regulated decisions.

Claude may assist with analysis, synthesis, drafting, task coordination, and tool use. But the regulated process must still define what the output means, who reviews it, when it can be used, and what evidence is retained.

Governance without paralysis

Many organizations make one of two mistakes. They either allow AI pilots to spread without governance, or they create a review model so heavy that useful experimentation stops. Neither works.

USDM recommends tiering Claude-supported workflows by risk:

- Low-risk productivity: drafting, summarization, brainstorming, and non-regulated internal support with clear data boundaries.

- Controlled business support: approved content preparation, knowledge retrieval, and workflow assistance with documented review.

- GxP-adjacent support: analysis or drafting that informs regulated work but requires SME approval before use.

- GxP workflow execution: agentic steps inside regulated processes requiring validation, monitoring, audit evidence, and formal change control.

This tiered model lets teams move faster where risk is low and apply more control where the workflow justifies it.
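
One way to make the tiering concrete is to record it as a small lookup, so each workflow carries its required controls with it. The sketch below is illustrative only and is not a USDM tool: the `RiskTier` names, the three yes/no questions in `classify`, and the control labels are assumptions derived from the four tiers listed above. A real classification would rest on a documented risk assessment, not three booleans.

```python
from enum import IntEnum


class RiskTier(IntEnum):
    """The four tiers described above, ordered from least to most controlled."""
    LOW_RISK_PRODUCTIVITY = 1
    CONTROLLED_BUSINESS_SUPPORT = 2
    GXP_ADJACENT_SUPPORT = 3
    GXP_WORKFLOW_EXECUTION = 4


# Control labels are paraphrased from the tier descriptions (assumption:
# each tier's controls can be summarized as a short list of labels).
REQUIRED_CONTROLS = {
    RiskTier.LOW_RISK_PRODUCTIVITY: ("clear data boundaries",),
    RiskTier.CONTROLLED_BUSINESS_SUPPORT: ("documented review",),
    RiskTier.GXP_ADJACENT_SUPPORT: ("SME approval before use",),
    RiskTier.GXP_WORKFLOW_EXECUTION: (
        "validation",
        "monitoring",
        "audit evidence",
        "formal change control",
    ),
}


def classify(
    *,
    acts_inside_regulated_process: bool,
    informs_regulated_work: bool,
    supports_controlled_business_content: bool,
) -> RiskTier:
    """Return the highest tier whose (hypothetical) screening question is true."""
    if acts_inside_regulated_process:
        return RiskTier.GXP_WORKFLOW_EXECUTION
    if informs_regulated_work:
        return RiskTier.GXP_ADJACENT_SUPPORT
    if supports_controlled_business_content:
        return RiskTier.CONTROLLED_BUSINESS_SUPPORT
    return RiskTier.LOW_RISK_PRODUCTIVITY


# Example: drafting that informs a deviation investigation but is not
# executed inside the regulated process itself.
tier = classify(
    acts_inside_regulated_process=False,
    informs_regulated_work=True,
    supports_controlled_business_content=True,
)
print(tier.name, REQUIRED_CONTROLS[tier])
```

Checking the most restrictive question first means a workflow that fits several descriptions always lands in the higher tier, which matches the intent of applying more control where the workflow justifies it.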

What “bounded autonomy” looks like

A Claude-supported agent in a GxP workflow should not receive broad, permanent access and a vague goal. A defensible design is narrower:

- Use approved connectors and repositories only.

- Limit actions to specific tasks, records, or workflow steps.

- Require human approval before regulated decisions, submissions, quality conclusions, or system-of-record changes.

- Log prompts, sources, outputs, actions, and review decisions where required.

- Monitor failures, hallucinations, access exceptions, rejected outputs, and drift in workflow behavior.
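
The constraints above can be sketched as a single authorization gate that every agent action passes through. This is a minimal illustration under stated assumptions, not a Claude or Anthropic API: `AgentPolicy`, `AuditLog`, `authorize`, and the connector and action names are all hypothetical, and a production design would sit in the integration layer between the agent and the systems of record.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class AgentPolicy:
    """Hypothetical per-agent policy: what it may touch and what needs sign-off."""
    approved_connectors: frozenset
    allowed_actions: frozenset
    approval_required: frozenset  # subset of allowed_actions needing a human


@dataclass
class AuditLog:
    """In-memory stand-in for a retained audit trail."""
    entries: list = field(default_factory=list)

    def record(self, **event) -> None:
        event["timestamp"] = datetime.now(timezone.utc).isoformat()
        self.entries.append(event)


def authorize(
    policy: AgentPolicy,
    log: AuditLog,
    connector: str,
    action: str,
    approved_by: Optional[str] = None,
) -> bool:
    """Allow an action only if it is in scope and, where required, approved."""
    if connector not in policy.approved_connectors:
        decision = "denied: connector not approved"
    elif action not in policy.allowed_actions:
        decision = "denied: action out of scope"
    elif action in policy.approval_required and approved_by is None:
        decision = "pending: human approval required"
    else:
        decision = "allowed"
    # Every request is logged, including denials, so exceptions can be monitored.
    log.record(connector=connector, action=action,
               approved_by=approved_by, decision=decision)
    return decision == "allowed"


# Illustrative usage with made-up connector and action names.
policy = AgentPolicy(
    approved_connectors=frozenset({"qms_readonly"}),
    allowed_actions=frozenset({"draft_summary", "update_record"}),
    approval_required=frozenset({"update_record"}),
)
log = AuditLog()
print(authorize(policy, log, "qms_readonly", "draft_summary"))
print(authorize(policy, log, "qms_readonly", "update_record"))
print(authorize(policy, log, "qms_readonly", "update_record",
                approved_by="qa.reviewer"))
```

Because denied and pending requests are logged alongside allowed ones, the same log can feed the monitoring of access exceptions and rejected outputs described in the last bullet.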

Examples worth evaluating

Good early candidates are workflows where Claude can reduce manual effort without replacing accountable judgment. Examples include inspection readiness evidence collection, SOP change impact summaries, deviation investigation preparation, validation test script drafting, regulatory intelligence briefing, and controlled content comparison.

Each candidate should be evaluated through the USDM Anthropic governance model and the broader agentic team adoption pattern: humans remain accountable, agents operate within role-specific guardrails, and evidence is designed into the workflow rather than bolted on later.

How USDM helps

USDM helps organizations identify where Claude agents belong, where they do not, and what controls are required for each use case. The work typically includes use case assessment, risk classification, workflow design, validation planning, connector governance, skill governance, monitoring design, and adoption support.

Key takeaways

- Claude-supported agents can support GxP workflows when autonomy is bounded and evidence is designed up front.

- Risk tiering prevents both uncontrolled AI sprawl and innovation-killing bureaucracy.

- Human review, validation, monitoring, and change control remain central to regulated AI adoption.

- Start with a USDM AI Readiness Assessment before scaling agents into regulated workflows.